Source Camera Identification Techniques: A Survey

Point Projection Mapping System for Tracking, Registering, Labeling, and Validating Optical Tissue Measurements

Identifying the Causes of Unexplained Dyspnea at High Altitude Using Normobaric Hypoxia with Echocardiography

Fast Data Generation for Training Deep-Learning 3D Reconstruction Approaches for Camera Arrays

Journal Description

Journal of Imaging

Journal of Imaging is an international, multi/interdisciplinary, peer-reviewed, open access journal of imaging techniques published online monthly by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), PubMed, PMC, dblp, Inspec, Ei Compendex, and other databases.

- Journal Rank: CiteScore - Q2 (Computer Graphics and Computer-Aided Design)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 21.7 days after submission; acceptance to publication is undertaken in 3.8 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 3.2 (2022); 5-Year Impact Factor: 3.2 (2022)

Latest Articles

Rock Slope Stability Analysis Using Terrestrial Photogrammetry and Virtual Reality on Ignimbritic Deposits

J. Imaging 2024, 10(5), 106; https://doi.org/10.3390/jimaging10050106 (registering DOI) - 28 Apr 2024

Abstract

Puerto de Cajas serves as a vital high-altitude passage in Ecuador, connecting the coastal region to the city of Cuenca. The stability of this rocky massif is carefully managed through the assessment of blocks and discontinuities, ensuring safe travel. This study presents a novel approach, employing rapid and cost-effective methods to evaluate an unexplored area within the protected expanse of Cajas. Using terrestrial photogrammetry and strategically positioned geomechanical stations along the slopes, we digitized the slope and generated a detailed point cloud capturing elusive terrain features. The collected data were validated by comparing directional data from Cloud Compare software with manual readings taken at control points with a digital compass integrated in a phone. The analysis encompasses three slopes, employing the SMR, Q-slope, and kinematic methodologies. Results from the SMR system closely align with kinematic analysis, indicating satisfactory slope quality. Nonetheless, continued vigilance in stability control remains imperative for ensuring road safety and preserving the site’s integrity. Moreover, this research lays the groundwork for the creation of a publicly accessible 3D repository, enhancing visualization capabilities through Google Virtual Reality. This initiative not only aids in replicating the findings but also facilitates access to an augmented reality environment, thereby fostering collaborative research endeavors.

(This article belongs to the Special Issue Exploring Challenges and Innovations in 3D Point Cloud Processing)

Open Access Article

Comparative Analysis of Color Space and Channel, Detector, and Descriptor for Feature-Based Image Registration

by

Wenan Yuan, Sai Raghavendra Prasad Poosa and Rutger Francisco Dirks

J. Imaging 2024, 10(5), 105; https://doi.org/10.3390/jimaging10050105 (registering DOI) - 28 Apr 2024

Abstract

The current study aimed to quantify the value of color spaces and channels as a potential superior replacement for standard grayscale images, as well as the relative performance of open-source detectors and descriptors for general feature-based image registration purposes, based on a large benchmark dataset. The public dataset UDIS-D, with 1106 diverse image pairs, was selected. In total, 21 color spaces or channels including RGB, XYZ, Y′CrCb, HLS, L*a*b* and their corresponding channels in addition to grayscale, nine feature detectors including AKAZE, BRISK, CSE, FAST, HL, KAZE, ORB, SIFT, and TBMR, and 11 feature descriptors including AKAZE, BB, BRIEF, BRISK, DAISY, FREAK, KAZE, LATCH, ORB, SIFT, and VGG were evaluated according to reprojection error (RE), root mean square error (RMSE), structural similarity index measure (SSIM), registration failure rate, and feature number, based on 1,950,984 image registrations. No meaningful benefits from color space or channel were observed, although XYZ, RGB color space and L* color channel were able to outperform grayscale by a very minor margin. Per the dataset, the best-performing color space or channel, detector, and descriptor were XYZ/RGB, SIFT/FAST, and AKAZE. The most robust color space or channel, detector, and descriptor were L*a*b*, TBMR, and VGG. The color channel, detector, and descriptor with the most initial detector features and final homography features were Z/L*, FAST, and KAZE. In terms of the best overall unfailing combinations, XYZ/RGB+SIFT/FAST+VGG/SIFT seemed to provide the highest image registration quality, while Z+FAST+VGG provided the most image features.

(This article belongs to the Special Issue Image Processing and Computer Vision: Algorithms and Applications)

Open Access Article

Vertebral and Femoral Bone Mineral Density (BMD) Assessment with Dual-Energy CT versus DXA Scan in Postmenopausal Females

by

Luca Pio Stoppino, Stefano Piscone, Sara Saccone, Saul Alberto Ciccarelli, Luca Marinelli, Paola Milillo, Crescenzio Gallo, Luca Macarini and Roberta Vinci

J. Imaging 2024, 10(5), 104; https://doi.org/10.3390/jimaging10050104 (registering DOI) - 27 Apr 2024

Abstract

This study aimed to demonstrate the potential role of dual-energy CT in assessing bone mineral density (BMD) using hydroxyapatite–fat material pairing in postmenopausal women. A retrospective study was conducted on 51 postmenopausal female patients who underwent DXA and DECT examinations for other clinical reasons. DECT images were acquired with spectral imaging using a 256-slice system. These images were processed and visualized using a HAP–fat material pair. Statistical analysis was performed using the Bland–Altman method to assess the agreement between DXA and DECT HAP–fat measurements. Mean BMD, vertebral, and femoral T-scores were obtained. For vertebral analysis, the Bland–Altman plot showed an inverse correlation (R2: −0.042; RMSE: 0.690) between T-scores and DECT HAP–fat values for measurements from L1 to L4, while a good linear correlation (R2: 0.341; RMSE: 0.589) was found for measurements at the femoral neck. In conclusion, we demonstrate the enhanced importance of BMD calculation through DECT, finding a statistically significant correlation only at the femoral neck where BMD results do not seem to be influenced by the overlap of the measurements on cortical and trabecular bone. This outcome could be beneficial in the future by reducing radiation exposure for patients already undergoing follow-up for chronic conditions.
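
The Bland–Altman agreement analysis mentioned in this abstract can be illustrated with a minimal sketch. It assumes the common formulation (mean difference as bias, limits of agreement at bias ± 1.96 standard deviations of the paired differences); the function name `bland_altman` and the sample values are hypothetical and are not taken from the study.

```python
from statistics import mean, stdev


def bland_altman(a, b):
    """Return (bias, lower_limit, upper_limit) for paired measurements.

    Assumes the usual 95% limits of agreement: bias +/- 1.96 * SD of the
    paired differences (sample standard deviation).
    """
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("need two equal-length samples with at least 2 pairs")
    diffs = [x - y for x, y in zip(a, b)]
    bias = mean(diffs)
    sd = stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Hypothetical paired readings from two methods, for illustration only.
method_a = [1.0, 2.0, 3.0, 4.0]
method_b = [0.8, 2.1, 2.9, 4.4]
bias, lo, hi = bland_altman(method_a, method_b)
print(f"bias={bias:.3f}, limits of agreement=({lo:.3f}, {hi:.3f})")
```

In a Bland–Altman plot, each pair's difference is drawn against the pair's mean, with horizontal lines at the bias and at the two limits.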

(This article belongs to the Section Medical Imaging)

Open Access Article

A Multi-Modal Foundation Model to Assist People with Blindness and Low Vision in Environmental Interaction

by

Yu Hao, Fan Yang, Hao Huang, Shuaihang Yuan, Sundeep Rangan, John-Ross Rizzo, Yao Wang and Yi Fang

J. Imaging 2024, 10(5), 103; https://doi.org/10.3390/jimaging10050103 - 26 Apr 2024

Abstract

People with blindness and low vision (pBLV) encounter substantial challenges when it comes to comprehensive scene recognition and precise object identification in unfamiliar environments. Additionally, due to the vision loss, pBLV have difficulty in accessing and identifying potential tripping hazards independently. Previous assistive technologies for the visually impaired often struggle in real-world scenarios due to the need for constant training and lack of robustness, which limits their effectiveness, especially in dynamic and unfamiliar environments, where accurate and efficient perception is crucial. Therefore, we frame our research question in this paper as: How can we assist pBLV in recognizing scenes, identifying objects, and detecting potential tripping hazards in unfamiliar environments, where existing assistive technologies often falter due to their lack of robustness? We hypothesize that by leveraging large pretrained foundation models and prompt engineering, we can create a system that effectively addresses the challenges faced by pBLV in unfamiliar environments. Motivated by the prevalence of large pretrained foundation models, particularly in assistive robotics applications, due to their accurate perception and robust contextual understanding in real-world scenarios induced by extensive pretraining, we present a pioneering approach that leverages foundation models to enhance visual perception for pBLV, offering detailed and comprehensive descriptions of the surrounding environment and providing warnings about potential risks. Specifically, our method begins by leveraging a large-image tagging model (i.e., Recognize Anything Model (RAM)) to identify all common objects present in the captured images. The recognition results and user query are then integrated into a prompt, tailored specifically for pBLV, using prompt engineering. 
By combining the prompt and input image, a vision-language foundation model (i.e., InstructBLIP) generates detailed and comprehensive descriptions of the environment and identifies potential risks in the environment by analyzing environmental objects and scenic landmarks, relevant to the prompt. We evaluate our approach through experiments conducted on both indoor and outdoor datasets. Our results demonstrate that our method can recognize objects accurately and provide insightful descriptions and analysis of the environment for pBLV.

(This article belongs to the Special Issue Image and Video Processing for Blind and Visually Impaired)

Open Access Article

Precision Agriculture: Computer Vision-Enabled Sugarcane Plant Counting in the Tillering Phase

by

Muhammad Talha Ubaid and Sameena Javaid

J. Imaging 2024, 10(5), 102; https://doi.org/10.3390/jimaging10050102 - 26 Apr 2024

Abstract

Sugarcane is the world’s largest crop by production quantity. It is the primary source for sugar, ethanol, chipboards, paper, barrages, and confectionery. Many people around the globe are affiliated with sugarcane production and its products. The sugarcane industries make agreements with farmers before the tillering phase of the plants. Industries are keen to know the pre-harvest estimate for a sugarcane field in order to plan their production and purchases. The contribution of this research is twofold: we publish our newly developed dataset, and we present a methodology to estimate the number of sugarcane plants in the tillering phase. The dataset was obtained from sugarcane fields in the fall season. In this work, a modified Faster R-CNN architecture, with feature extraction using VGG-16 with Inception-v3 modules and a sigmoid threshold function, is proposed for the detection and classification of sugarcane plants. The proposed architecture achieved promising results, with 82.10% accuracy, showing the viability of the developed methodology.

(This article belongs to the Special Issue Imaging Applications in Agriculture)

Open Access Article

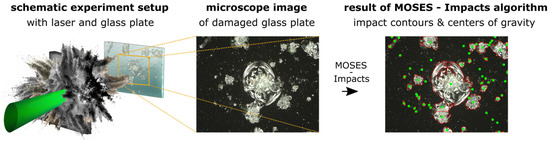

Separation and Analysis of Connected, Micrometer-Sized, High-Frequency Damage on Glass Plates due to Laser-Accelerated Material Fragments in Vacuum

by

Sabrina Pietzsch, Sebastian Wollny and Paul Grimm

J. Imaging 2024, 10(5), 101; https://doi.org/10.3390/jimaging10050101 - 26 Apr 2024

Abstract

In this paper, we present a new processing method, called MOSES—Impacts, for the detection of micrometer-sized damage on glass plate surfaces. It extends existing methods by a separation of damaged areas, called impacts, to support state-of-the-art recycling systems in optimizing their parameters. These recycling systems are used to repair process-related damages on glass plate surfaces, caused by accelerated material fragments, which arise during a laser–matter interaction in a vacuum. Due to a high number of impacts, the presented MOSES—Impacts algorithm focuses on the separation of connected impacts in two-dimensional images. This separation is crucial for the extraction of relevant features such as centers of gravity and radii of impacts, which are used as recycling parameters. The results show that the MOSES—Impacts algorithm effectively separates impacts, achieves a mean agreement with human users of (82.0 ± 2.0)%, and improves the recycling of glass plate surfaces by identifying around 7% of glass plate surface area as being not in need of repair compared to existing methods.

(This article belongs to the Section Computer Vision and Pattern Recognition)

Open Access Article

DepthCrackNet: A Deep Learning Model for Automatic Pavement Crack Detection

by

Alireza Saberironaghi and Jing Ren

J. Imaging 2024, 10(5), 100; https://doi.org/10.3390/jimaging10050100 - 26 Apr 2024

Abstract

Detecting cracks in the pavement is a vital component of ensuring road safety. Since manual identification of these cracks can be time-consuming, an automated method is needed to speed up this process. However, creating such a system is challenging due to factors including crack variability, variations in pavement materials, and the occurrence of miscellaneous objects and anomalies on the pavement. Motivated by the latest progress in deep learning applied to computer vision, we propose an effective U-Net-shaped model named DepthCrackNet. Our model employs the Double Convolution Encoder (DCE), composed of a sequence of convolution layers, for robust feature extraction while keeping parameters optimally efficient. We have incorporated the TriInput Multi-Head Spatial Attention (TMSA) module into our model; in this module, each head operates independently, capturing various spatial relationships and boosting the extraction of rich contextual information. Furthermore, DepthCrackNet employs the Spatial Depth Enhancer (SDE) module, specifically designed to augment the feature extraction capabilities of our segmentation model. The performance of the DepthCrackNet was evaluated on two public crack datasets: Crack500 and DeepCrack. In our experimental studies, the network achieved mIoU scores of 77.0% and 83.9% with the Crack500 and DeepCrack datasets, respectively.
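
The mIoU scores reported in this abstract can be read against a minimal sketch of the metric. It assumes the common definition (per-class intersection over union, averaged over classes) on flat label lists; the exact averaging protocol used in the paper is not stated here, and the function names, the empty-union convention, and the toy masks are assumptions for illustration.

```python
def iou(pred_mask, target_mask):
    """Intersection over union for one class, given two boolean sequences."""
    inter = sum(1 for p, t in zip(pred_mask, target_mask) if p and t)
    union = sum(1 for p, t in zip(pred_mask, target_mask) if p or t)
    # Assumption: a class absent from both masks counts as a perfect match.
    return inter / union if union else 1.0


def mean_iou(pred, target, num_classes=2):
    """Average the per-class IoU over all classes (0 = background, 1 = crack)."""
    scores = [
        iou([p == c for p in pred], [t == c for t in target])
        for c in range(num_classes)
    ]
    return sum(scores) / num_classes


# Toy flattened segmentation maps, for illustration only.
pred = [0, 0, 1, 1, 1, 0]
target = [0, 1, 1, 1, 0, 0]
score = mean_iou(pred, target)
print(f"mIoU = {score:.2f}")
```

For these toy masks, each class has an intersection of 2 pixels and a union of 4, so both per-class IoU values and their mean are 0.5.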

(This article belongs to the Special Issue Deep Learning in Image Analysis: Progress and Challenges)

Open Access Article

Radiological Comparison of Canal Fill between Collared and Non-Collared Femoral Stems: A Two-Year Follow-Up after Total Hip Arthroplasty

by

Itay Ashkenazi, Amit Benady, Shlomi Ben Zaken, Shai Factor, Mohamed Abadi, Ittai Shichman, Samuel Morgan, Aviram Gold, Nimrod Snir and Yaniv Warschawski

J. Imaging 2024, 10(5), 99; https://doi.org/10.3390/jimaging10050099 (registering DOI) - 25 Apr 2024

Abstract

Introduction: Collared femoral stems in total hip arthroplasty (THA) offer reduced subsidence and periprosthetic fractures but raise concerns about fit accuracy and stem sizing. This study compares collared and non-collared stems to assess the stem–canal fill ratio (CFR) and fixation indicators, aiming to guide implant selection and enhance THA outcomes. Methods: This retrospective single-center study examined primary THA patients who received Corail cementless stems between August 2015 and October 2020, with a minimum of two years of radiological follow-up. The study compared preoperative bone quality assessments, including the Dorr classification, the canal flare index (CFI), the morphological cortical index (MCI), and the canal bone ratio (CBR), as well as postoperative radiographic evaluations, such as the CFR and component fixation, between patients who received a collared or a non-collared femoral stem. Results: The study analyzed 202 THAs, with 103 in the collared cohort and 99 in the non-collared cohort. Patients’ demographics showed differences in age (p = 0.02) and ASA classification (p = 0.01) but similar preoperative bone quality between groups, as suggested by the Dorr classification (p = 0.15), CFI (p = 0.12), MCI (p = 0.26), and CBR (p = 0.50). At the two-year follow-up, femoral stem CFRs (p = 0.59 and p = 0.27) were comparable between collared and non-collared cohorts. Subsidence rates were almost doubled for non-collared patients (19.2 vs. 11.7%, p = 0.17), however, not to a level of clinical significance. Conclusion: The findings of this study show that both collared and non-collared Corail stems produce comparable outcomes in terms of the CFR and radiographic indicators for stem fixation. These findings reduce concerns about stem under-sizing and micro-motion in collared stems. While this study provides insights into the collar design debate in THA, further research remains necessary.

(This article belongs to the Section Medical Imaging)

Open Access Article

Derivative-Free Iterative One-Step Reconstruction for Multispectral CT

by

Thomas Prohaszka, Lukas Neumann and Markus Haltmeier

J. Imaging 2024, 10(5), 98; https://doi.org/10.3390/jimaging10050098 (registering DOI) - 24 Apr 2024

Abstract

Image reconstruction in multispectral computed tomography (MSCT) requires solving a challenging nonlinear inverse problem, commonly tackled via iterative optimization algorithms. Existing methods necessitate computing the derivative of the forward map and potentially its regularized inverse. In this work, we present a simple yet highly effective algorithm for MSCT image reconstruction, utilizing iterative update mechanisms that leverage the full forward model in the forward step and a derivative-free adjoint problem. Our approach demonstrates both fast convergence and superior performance compared to existing algorithms, making it an interesting candidate for future work. We also discuss further generalizations of our method and its combination with additional regularization and other data discrepancy terms.

(This article belongs to the Special Issue Image Processing and Computer Vision: Algorithms and Applications)

Open Access Article

SVD-Based Mind-Wandering Prediction from Facial Videos in Online Learning

by

Nguy Thi Lan Anh, Nguyen Gia Bach, Nguyen Thi Thanh Tu, Eiji Kamioka and Phan Xuan Tan

J. Imaging 2024, 10(5), 97; https://doi.org/10.3390/jimaging10050097 (registering DOI) - 24 Apr 2024

Abstract

This paper presents a novel approach to mind-wandering prediction in the context of webcam-based online learning. We implemented a Singular Value Decomposition (SVD)-based 1D temporal eye-signal extraction method, which relies solely on eye landmark detection and eliminates the need for gaze tracking or specialized hardware, and then extracted suitable features from the signals to train the prediction model. Our thorough experimental framework facilitates the evaluation of our approach alongside baseline models, particularly in the analysis of temporal eye signals and the prediction of attentional states. Notably, our SVD-based signal captures both subtle and major eye movements, including changes in the eye boundary and pupil, surpassing the limited capabilities of eye aspect ratio (EAR)-based signals. Our proposed model exhibits a 2% improvement in the overall Area Under the Receiver Operating Characteristics curve (AUROC) metric and 7% in the F1-score metric for ‘not-focus’ prediction, compared to the combination of EAR-based and computationally intensive gaze-based models used in the baseline study. These contributions have potential implications for enhancing the field of attentional state prediction in online learning, offering a practical and effective solution to benefit educational experiences.

(This article belongs to the Special Issue Computer Vision and Deep Learning: Trends and Applications (2nd Edition))

Open Access Article

Development and Implementation of an Innovative Framework for Automated Radiomics Analysis in Neuroimaging

by

Chiara Camastra, Giovanni Pasini, Alessandro Stefano, Giorgio Russo, Basilio Vescio, Fabiano Bini, Franco Marinozzi and Antonio Augimeri

J. Imaging 2024, 10(4), 96; https://doi.org/10.3390/jimaging10040096 - 22 Apr 2024

Abstract

Radiomics represents an innovative approach to medical image analysis, enabling comprehensive quantitative evaluation of radiological images through advanced image processing and Machine or Deep Learning algorithms. This technique uncovers intricate data patterns beyond human visual detection. Traditionally, executing a radiomic pipeline involves multiple standardized phases across several software platforms. This limitation was overcome through the development of the matRadiomics application. MatRadiomics, a freely available, IBSI-compliant tool, features an intuitive Graphical User Interface (GUI) that facilitates the entire radiomics workflow, from DICOM image importation to segmentation, feature selection and extraction, and Machine Learning model construction. In this project, an extension of matRadiomics was developed to support the importation of brain MRI images and segmentations in NIfTI format, thus extending its applicability to neuroimaging. This enhancement allows for the seamless execution of radiomic pipelines within matRadiomics, offering substantial advantages to the realm of neuroimaging.

(This article belongs to the Topic Applications in Image Analysis and Pattern Recognition)

Open Access Article

Scanning Micro X-ray Fluorescence and Multispectral Imaging Fusion: A Case Study on Postage Stamps

by

Theofanis Gerodimos, Ioanna Vasiliki Patakiouta, Vassilis M. Papadakis, Dimitrios Exarchos, Anastasios Asvestas, Georgios Kenanakis, Theodore E. Matikas and Dimitrios F. Anagnostopoulos

J. Imaging 2024, 10(4), 95; https://doi.org/10.3390/jimaging10040095 - 22 Apr 2024

Abstract

Scanning micro X-ray fluorescence (μ-XRF) and multispectral imaging (MSI) were applied to study philately stamps, selected for their small size and intricate structures. The μ-XRF measurements were accomplished using the M6 Jetstream Bruker scanner under optimized conditions for spatial resolution, while the MSI measurements were performed employing the XpeCAM-X02 camera. The datasets were acquired asynchronously. Elemental distribution maps can be extracted from the μ-XRF dataset, while chemical distribution maps can be obtained from the analysis of the multispectral dataset. The objective of the present work is the fusion of the datasets from the two spectral imaging modalities. An algorithmic co-registration of the two datasets is applied as a first step, aiming to align the multispectral and μ-XRF images and to adapt to the pixel sizes, as small as a few tens of micrometers. The dataset fusion is accomplished by applying k-means clustering of the multispectral dataset, attributing a representative spectrum to each pixel, and defining the multispectral clusters. Subsequently, the μ-XRF dataset within a specific multispectral cluster is analyzed by evaluating the mean XRF spectrum and performing k-means sub-clustering of the μ-XRF dataset, allowing the differentiation of areas with variable elemental composition within the multispectral cluster. The data fusion approach proves its validity and strength in the context of philately stamps. We demonstrate that the fusion of two spectral imaging modalities enhances their analytical capabilities significantly. The spectral analysis of pixels within clusters can provide more information than analyzing the same pixels as part of the entire dataset.

(This article belongs to the Section Color, Multi-spectral, and Hyperspectral Imaging)

Open Access Article

Enhancing Apple Cultivar Classification Using Multiview Images

by

Silvia Krug and Tino Hutschenreuther

J. Imaging 2024, 10(4), 94; https://doi.org/10.3390/jimaging10040094 - 17 Apr 2024

Abstract

Apple cultivar classification is challenging due to the inter-class similarity and high intra-class variations. Human experts do not rely on single-view features but rather study each viewpoint of the apple to identify a cultivar, paying close attention to various details. Following our previous work, we try to establish a similar multiview approach for machine-learning (ML)-based apple classification in this paper. In our previous work, we studied apple classification using one single view. While these results were promising, it also became clear that one view alone might not contain enough information in the case of many classes or cultivars. Therefore, exploring multiview classification for this task is the next logical step. Multiview classification is nothing new, and we use state-of-the-art approaches as a base. Our goal is to find the best approach for the specific apple classification task and study what is achievable with the given methods towards our future goal of applying this on a mobile device without the need for internet connectivity. In this study, we compare an ensemble model with two cases where we use single networks: one without view specialization trained on all available images without view assignment and one where we combine the separate views into a single image of one specific instance. The two latter options reflect dataset organization and preprocessing to allow the use of smaller models in terms of stored weights and number of operations than an ensemble model. We compare the different approaches based on our custom apple cultivar dataset. The results show that the state-of-the-art ensemble provides the best result. However, using images with combined views shows a decrease in accuracy by 3% while requiring only 60% of the memory for weights. Thus, simpler approaches with enhanced preprocessing can open a trade-off for classification tasks on mobile devices.

(This article belongs to the Special Issue Computer Vision and Deep Learning: Trends and Applications (2nd Edition))

Open Access Review

Review of Image-Processing-Based Technology for Structural Health Monitoring of Civil Infrastructures

by

Ji-Woo Kim, Hee-Wook Choi, Sung-Keun Kim and Wongi S. Na

J. Imaging 2024, 10(4), 93; https://doi.org/10.3390/jimaging10040093 - 16 Apr 2024

Abstract

The continuous monitoring of civil infrastructures is crucial for ensuring public safety and extending the lifespan of structures. In recent years, image-processing-based technologies have emerged as powerful tools for the structural health monitoring (SHM) of civil infrastructures. This review provides a comprehensive overview of the advancements, applications, and challenges associated with image processing in the field of SHM. The discussion encompasses various imaging techniques such as satellite imagery, Light Detection and Ranging (LiDAR), optical cameras, and other non-destructive testing methods. Key topics include the use of image processing for damage detection, crack identification, deformation monitoring, and overall structural assessment. This review explores the integration of artificial intelligence and machine learning techniques with image processing for enhanced automation and accuracy in SHM. By consolidating the current state of image-processing-based technology for SHM, this review aims to show the full potential of image-based approaches for researchers, engineers, and professionals involved in civil engineering, SHM, image processing, and related fields.

(This article belongs to the Special Issue Image Processing and Computer Vision: Algorithms and Applications)

Open AccessArticle

High Dynamic Range Image Reconstruction from Saturated Images of Metallic Objects

by

Shoji Tominaga and Takahiko Horiuchi

J. Imaging 2024, 10(4), 92; https://doi.org/10.3390/jimaging10040092 - 15 Apr 2024

Abstract

This study considers a method for reconstructing a high dynamic range (HDR) original image from a single saturated low dynamic range (LDR) image of metallic objects. A deep neural network approach was adopted for the direct mapping of an 8-bit LDR image to HDR. An HDR image database was first constructed using a large number of various metallic objects with different shapes. Each captured HDR image was clipped to create a set of 8-bit LDR images. All pairs of HDR and LDR images were used to train and test the network. Subsequently, a convolutional neural network (CNN) was designed in the form of a deep U-Net-like architecture. The network consisted of an encoder, a decoder, and a skip connection to maintain high image resolution. The CNN algorithm was constructed using the learning functions in MATLAB. The entire network consisted of 32 layers and 85,900 learnable parameters. The performance of the proposed method was examined in experiments using a test image set. The proposed method was also compared with other methods and confirmed to be significantly superior in terms of reconstruction accuracy, histogram fitting, and psychological evaluation.
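The clipping step that turns a captured HDR image into 8-bit LDR training inputs can be sketched as follows. This is a minimal illustration, not the authors' MATLAB pipeline: the function name `clip_to_ldr`, the `exposure` parameter, and the sample radiance values are all assumptions, and the abstract does not say how the set of LDR images was parameterized.

```python
def clip_to_ldr(hdr_pixels, exposure):
    """Scale linear HDR values by an exposure factor and clip them to 8 bits.

    Assumption: a value of 1.0 / exposure maps to white (255); anything
    brighter saturates, which is what the network must later undo.
    """
    ldr = []
    for value in hdr_pixels:
        scaled = round(value * exposure * 255)
        ldr.append(max(0, min(255, scaled)))
    return ldr


def saturated_fraction(ldr_pixels):
    """Fraction of pixels that hit the 8-bit ceiling."""
    return sum(1 for v in ldr_pixels if v == 255) / len(ldr_pixels)


# Hypothetical linear radiances along one row of a metallic highlight.
hdr_row = [0.02, 0.4, 1.0, 3.7, 12.0]

# Several exposures give several LDR versions of the same HDR row.
ldr_set = {exposure: clip_to_ldr(hdr_row, exposure) for exposure in (0.25, 1.0)}
```

At `exposure=1.0` the three brightest samples all collapse to 255, so their original ratios (1.0 : 3.7 : 12.0) are lost in the LDR image; each (LDR, HDR) pair then serves as one training example for the reconstruction network.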

(This article belongs to the Special Issue Imaging Technologies for Understanding Material Appearance)

Open AccessArticle

Correlated Decision Fusion Accompanied with Quality Information on a Multi-Band Pixel Basis for Land Cover Classification

by

Spiros Papadopoulos, Georgia Koukiou and Vassilis Anastassopoulos

J. Imaging 2024, 10(4), 91; https://doi.org/10.3390/jimaging10040091 - 12 Apr 2024

Abstract

Decision fusion plays a crucial role in achieving a cohesive and unified outcome by merging diverse perspectives. Within the realm of remote sensing classification, these methodologies become indispensable when synthesizing data from multiple sensors to arrive at conclusive decisions. In our study, we leverage fully Polarimetric Synthetic Aperture Radar (PolSAR) and thermal infrared data to establish distinct decisions for each pixel pertaining to its land cover classification. To enhance the classification process, we employ Pauli’s decomposition components and land surface temperature as features. This approach facilitates the extraction of local decisions for each pixel, which are subsequently integrated through majority voting to form a comprehensive global decision for each land cover type. Furthermore, we investigate the correlation between corresponding pixels in the data from each sensor, aiming to achieve pixel-level correlated decision fusion at the fusion center. Our methodology entails a thorough exploration of the employed classifiers, coupled with the mathematical foundations necessary for the fusion of correlated decisions. Quality information is integrated into the decision fusion process, ensuring a comprehensive and robust classification outcome. The novelty of the method is its simplicity in the number of features used as well as the simple way of fusing decisions.
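The majority-voting stage, in which local per-pixel decisions are merged into one global decision, might look like the sketch below. It covers plain majority voting only; the correlated fusion and the quality weighting described in the abstract are not modeled. The class labels, the number of local classifiers, and the function names are hypothetical.

```python
from collections import Counter


def majority_vote(labels):
    """Return the most frequent label; ties go to the label seen first."""
    return Counter(labels).most_common(1)[0][0]


def fuse_pixelwise(decision_maps):
    """Fuse several per-pixel label maps (flat lists of equal length)."""
    return [majority_vote(votes) for votes in zip(*decision_maps)]


# Hypothetical local decisions for four pixels, one row per feature
# (for example three Pauli components and land surface temperature).
local_decisions = [
    ["water", "urban", "forest", "urban"],
    ["water", "urban", "urban", "forest"],
    ["water", "forest", "forest", "urban"],
    ["urban", "urban", "forest", "urban"],
]

global_decision = fuse_pixelwise(local_decisions)
```

With an even number of voters a tie is possible; here it is resolved in favor of the first classifier that cast one of the tied labels, which is a design choice of this sketch rather than something the abstract specifies.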

(This article belongs to the Special Issue Data Processing with Artificial Intelligence in Thermal Imagery)

Open AccessArticle

Automated Landmark Annotation for Morphometric Analysis of Distal Femur and Proximal Tibia

by

Jonas Grammens, Annemieke Van Haver, Imelda Lumban-Gaol, Femke Danckaers, Peter Verdonk and Jan Sijbers

J. Imaging 2024, 10(4), 90; https://doi.org/10.3390/jimaging10040090 - 11 Apr 2024

Abstract

Manual anatomical landmarking for morphometric knee bone characterization in orthopedics is highly time-consuming and shows high operator variability. Therefore, automation could be a substantial improvement for diagnostics and personalized treatments relying on landmark-based methods. Applications include implant sizing and planning, meniscal allograft sizing, and morphological risk factor assessment. For twenty MRI-based 3D bone and cartilage models, anatomical landmarks were manually applied by three experts, and morphometric measurements for 3D characterization of the distal femur and proximal tibia were calculated from all observations. One expert performed the landmark annotations three times. Intra- and inter-observer variations were assessed for landmark position and measurements. The mean of the three expert annotations served as the ground truth. Next, automated landmark annotation was performed by elastic deformation of a template shape, followed by landmark optimization at extreme positions (highest/lowest/most medial/lateral point). The results of our automated annotation method were compared with ground truth, and percentages of landmarks and measurements adhering to different tolerances were calculated. Reliability was evaluated by the intraclass correlation coefficient (ICC). For the manual annotations, the inter-observer absolute difference was 1.53 ± 1.22 mm (mean ± SD) for the landmark positions and 0.56 ± 0.55 mm (mean ± SD) for the morphometric measurements. Automated versus manual landmark extraction differed by an average of 2.05 mm. The automated measurements demonstrated an absolute difference of 0.78 ± 0.60 mm (mean ± SD) from their manual counterparts. Overall, 92% of the automated landmarks were within 4 mm of the expert mean position, and 95% of all morphometric measurements were within 2 mm of the expert mean measurements. The ICC (manual versus automated) for automated morphometric measurements was between 0.926 and 1. 
Manual annotations required on average 18 min of operator interaction time, while automated annotations only needed 7 min of operator-independent computing time. Considering the time consumption and variability among observers, there is a clear need for a more efficient, standardized, and operator-independent algorithm. Our automated method demonstrated excellent accuracy and reliability for landmark positioning and morphometric measurements. Above all, this automated method will lead to a faster, scalable, and operator-independent morphometric analysis of the knee.

(This article belongs to the Section Medical Imaging)

Open AccessArticle

Subjective Straylight Index: A Visual Test for Retinal Contrast Assessment as a Function of Veiling Glare

by

Francisco J. Ávila, Pilar Casado, Mª Concepción Marcellán, Laura Remón, Jorge Ares, Mª Victoria Collados and Sofía Otín

J. Imaging 2024, 10(4), 89; https://doi.org/10.3390/jimaging10040089 - 10 Apr 2024

Abstract

Spatial aspects of visual performance are usually evaluated through visual acuity charts and contrast sensitivity (CS) tests. CS tests are generated by progressively reducing the contrast level of the visual charts. However, the quality of retinal images can be affected by both ocular aberrations and scattering effects, and neither factor is incorporated as a parameter in visual tests in clinical practice. We propose a new computational methodology to generate visual acuity charts affected by ocular scattering effects. Glare effects on the visual tests are generated by combining an ocular straylight meter methodology with the Commission Internationale de l’Eclairage’s (CIE) general disability glare formula. A new function for retinal contrast assessment is proposed, the subjective straylight function (SSF), which provides the maximum tolerance to the perception of straylight in an observed visual acuity test. Once the SSF is obtained, the subjective straylight index (SSI) is defined as the area under the SSF curve. The results report the normal values of the SSI in a population of 30 young healthy subjects (19 ± 1 years old); a normal distribution with a peak centered at SSI = 0.46 was found. The SSI was also evaluated as a function of both spatial and temporal aspects of vision. Ocular wavefront measures revealed a statistical correlation of the SSI with defocus and trefoil terms. In addition, the time recovery (TR) after induced total disability glare and the SSI were related; in particular, the higher the TR, the greater the SSI value for high- and mid-contrast levels of the visual test. No relationships were found for low-contrast visual targets. To conclude, a new computational method for retinal contrast assessment as a function of ocular straylight was proposed as a complementary subjective test for visual function performance.

(This article belongs to the Special Issue Advances in Retinal Image Processing)

Open AccessArticle

Multi-View Gait Analysis by Temporal Geometric Features of Human Body Parts

by

Thanyamon Pattanapisont, Kazunori Kotani, Prarinya Siritanawan, Toshiaki Kondo and Jessada Karnjana

J. Imaging 2024, 10(4), 88; https://doi.org/10.3390/jimaging10040088 - 09 Apr 2024

Abstract

Gait is a walking pattern that can help identify a person. Recently, gait analysis has employed vision-based pose estimation for further feature extraction. This research aims to identify a person by analyzing their walking pattern. Moreover, the authors intend to extend gait analysis to other tasks, e.g., clinical, psychological, and emotional analysis. A vision-based human pose estimation method is used in this study to extract the joint angles and the rank correlation between them. We use two multi-view gait databases for the experiments, i.e., CASIA-B and OUMVLP-Pose. The features are separated into three parts, i.e., whole-, upper-, and lower-body features, to study the effect of body-part features on gait analysis. For person identity matching, the minimum Dynamic Time Warping (DTW) distance is determined. Additionally, we apply a majority voting algorithm to integrate the separate matching results from multiple cameras; this improved accuracy by up to approximately 30% compared to matching without majority voting.
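The matching scheme in this abstract (minimum DTW distance per camera, then majority voting across cameras) can be sketched as below. This is an illustrative reduction under several assumptions: each gait sample is a single one-dimensional joint-angle sequence, the local cost is the absolute difference, and the identities, angle values, and function names are hypothetical. The paper's actual features (multiple joint angles and their rank correlations) are richer.

```python
from collections import Counter


def dtw_distance(a, b):
    """Classic dynamic-programming DTW between two numeric sequences."""
    inf = float("inf")
    n, m = len(a), len(b)
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = abs(a[i - 1] - b[j - 1])
            cost[i][j] = step + min(cost[i - 1][j],      # skip a sample in a
                                    cost[i][j - 1],      # skip a sample in b
                                    cost[i - 1][j - 1])  # advance both
    return cost[n][m]


def match_identity(probe, gallery):
    """Return the gallery identity with the minimum DTW distance."""
    return min(gallery, key=lambda person: dtw_distance(probe, gallery[person]))


def identify_multi_view(probes, gallery):
    """Match each camera's probe separately, then take a majority vote."""
    votes = [match_identity(probe, gallery) for probe in probes]
    return Counter(votes).most_common(1)[0][0]


# Hypothetical knee-angle sequences (degrees) for two enrolled people.
gallery = {"A": [10, 20, 30, 20, 10], "B": [30, 30, 30, 30, 30]}

# One probe per camera; the second camera is misleading on its own.
probes = [[10, 20, 20, 30, 20, 10], [29, 30, 31, 30], [11, 19, 30, 21, 10]]
identity = identify_multi_view(probes, gallery)
```

The second camera alone would match person B, but the vote across all three cameras recovers A, which is the kind of correction the majority-voting step is meant to provide.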

(This article belongs to the Special Issue Image Processing and Computer Vision: Algorithms and Applications)

Open AccessArticle

Measuring Effectiveness of Metamorphic Relations for Image Processing Using Mutation Testing

by

Fakeeha Jafari and Aamer Nadeem

J. Imaging 2024, 10(4), 87; https://doi.org/10.3390/jimaging10040087 - 06 Apr 2024

Abstract

Testing intricate, advanced software systems is quite challenging due to the absence of a test oracle. Metamorphic testing is a popular technique to alleviate the test oracle problem. The effectiveness of metamorphic testing depends on metamorphic relations (MRs). MRs represent the essential properties of the system under test and are evaluated by their fault detection rates. The existing techniques for the evaluation of MRs are not comprehensive, as very few mutation operators are used to generate very few mutants. In this research, we have proposed six new MRs for dilation and erosion operations. The fault detection rate of the six newly proposed MRs is determined using mutation testing. We have used eight applicable mutation operators and determined their effectiveness. By using these applicable operators, we have ensured that all possible mutants are generated, which shows that all the faults in the system under test are fully identified. Results of the evaluation of four MRs for edge detection show an improvement in all the respective MRs, especially in MR1 and MR4, with fault detection rates of 76.54% and 69.13%, respectively, which are 32% and 24% higher than with the existing technique. The fault detection rates of MR2 and MR3 are also improved by 1%. Similarly, results for dilation and erosion show that, out of 8 MRs, the fault detection rates of four MRs are higher than with the existing technique. In the proposed technique, MR1 is improved by 39%, MR4 is improved by 0.5%, MR6 is improved by 17%, and MR8 is improved by 29%. We have also compared the results of our proposed MRs with the existing MRs of dilation and erosion operations. Results show that the proposed MRs complement the existing MRs effectively, as the new MRs can find faults that are not identified by the existing MRs.
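To make the idea concrete, the sketch below checks one metamorphic relation for binary dilation and erosion against a hand-written mutant and computes a fault detection rate. The abstract does not list the proposed MRs or the eight mutation operators, so everything here is an assumption for illustration: the relation used is the textbook duality between erosion and dilation (for a symmetric 3x3 structuring element, ignoring out-of-bounds cells), and the single mutant replaces `any` with `all`.

```python
def _window(img, r, c):
    """Values in the 3x3 window around (r, c), ignoring out-of-bounds cells."""
    rows, cols = len(img), len(img[0])
    return [img[rr][cc]
            for rr in range(r - 1, r + 2)
            for cc in range(c - 1, c + 2)
            if 0 <= rr < rows and 0 <= cc < cols]


def _apply(img, reducer):
    """Apply a window reducer (any or all) at every pixel of a binary image."""
    return [[int(reducer(_window(img, r, c))) for c in range(len(img[0]))]
            for r in range(len(img))]


def dilate(img):
    return _apply(img, any)


def erode(img):
    return _apply(img, all)


def mutant_dilate(img):
    # Hypothetical mutant: the `any` in dilate was replaced by `all`.
    return _apply(img, all)


def complement(img):
    return [[1 - v for v in row] for row in img]


def mr_duality(dilate_fn, erode_fn, img):
    """MR: erode(img) must equal the complement of dilate(complement(img))."""
    return erode_fn(img) == complement(dilate_fn(complement(img)))


def fault_detection_rate(mutants, img):
    """Share of mutants killed, i.e. for which the MR is violated."""
    killed = sum(1 for m in mutants if not mr_duality(m, erode, img))
    return killed / len(mutants)


source_image = [[0, 0, 0, 0],
                [0, 1, 1, 0],
                [0, 1, 1, 0],
                [0, 0, 0, 0]]
rate = fault_detection_rate([mutant_dilate], source_image)
```

No expected output image is needed: the relation only compares two outputs of the program under test, which is how metamorphic testing sidesteps the missing oracle. A realistic evaluation would generate many mutants with a mutation-testing tool and report the rate per MR, as the abstract describes.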

Topics

Topic in

Algorithms, Diagnostics, Entropy, Information, J. Imaging

Application of Machine Learning in Molecular Imaging

Topic Editors: Allegra Conti, Nicola Toschi, Marianna Inglese, Andrea Duggento, Matthew Grech-Sollars, Serena Monti, Giancarlo Sportelli, Pietro Carra

Deadline: 31 May 2024

Topic in

Applied Sciences, Computation, Entropy, J. Imaging

Color Image Processing: Models and Methods (CIP: MM)

Topic Editors: Giuliana Ramella, Isabella Torcicollo

Deadline: 30 July 2024

Topic in

Applied Sciences, Sensors, J. Imaging, MAKE

Applications in Image Analysis and Pattern Recognition

Topic Editors: Bin Fan, Wenqi Ren

Deadline: 31 August 2024

Topic in

Applied Sciences, Electronics, J. Imaging, MAKE, Remote Sensing

Computational Intelligence in Remote Sensing: 2nd Edition

Topic Editors: Yue Wu, Kai Qin, Maoguo Gong, Qiguang Miao

Deadline: 31 December 2024

Special Issues

Special Issue in

J. Imaging

Advances and Challenges in Multimodal Machine Learning 2nd Edition

Guest Editor: Georgina Cosma

Deadline: 30 April 2024

Special Issue in

J. Imaging

Modelling of Human Visual System in Image Processing

Guest Editors: Alexey Mashtakov, Edoardo Provenzi

Deadline: 24 May 2024

Special Issue in

J. Imaging

The Mixed Reality Revolution: Challenges and Prospects 2nd Edition

Guest Editors: Sébastien Mavromatis, Jean Sequeira

Deadline: 31 May 2024

Special Issue in

J. Imaging

Advances and Challenges in Multimodal Machine Learning

Guest Editor: Georgina Cosma

Deadline: 30 June 2024